There’s a particular kind of SEO anxiety that arrives about three weeks after a major Google update. Rankings have moved. Traffic graphs have changed shape. The post-mortems begin: what happened, who was affected, was it the helpful content system, was it the core update, was it something more specific. By the time the analysis is complete and the strategy adjustments are made, weeks have passed and the competitive landscape has already reshuffled.

This cycle — update happens, analysis follows, response lags — is normal. It’s also increasingly insufficient, as Google’s update cadence has accelerated and the AI-powered search layer introduces a new set of changes that operate on different timelines and through different mechanisms than traditional algorithm updates.

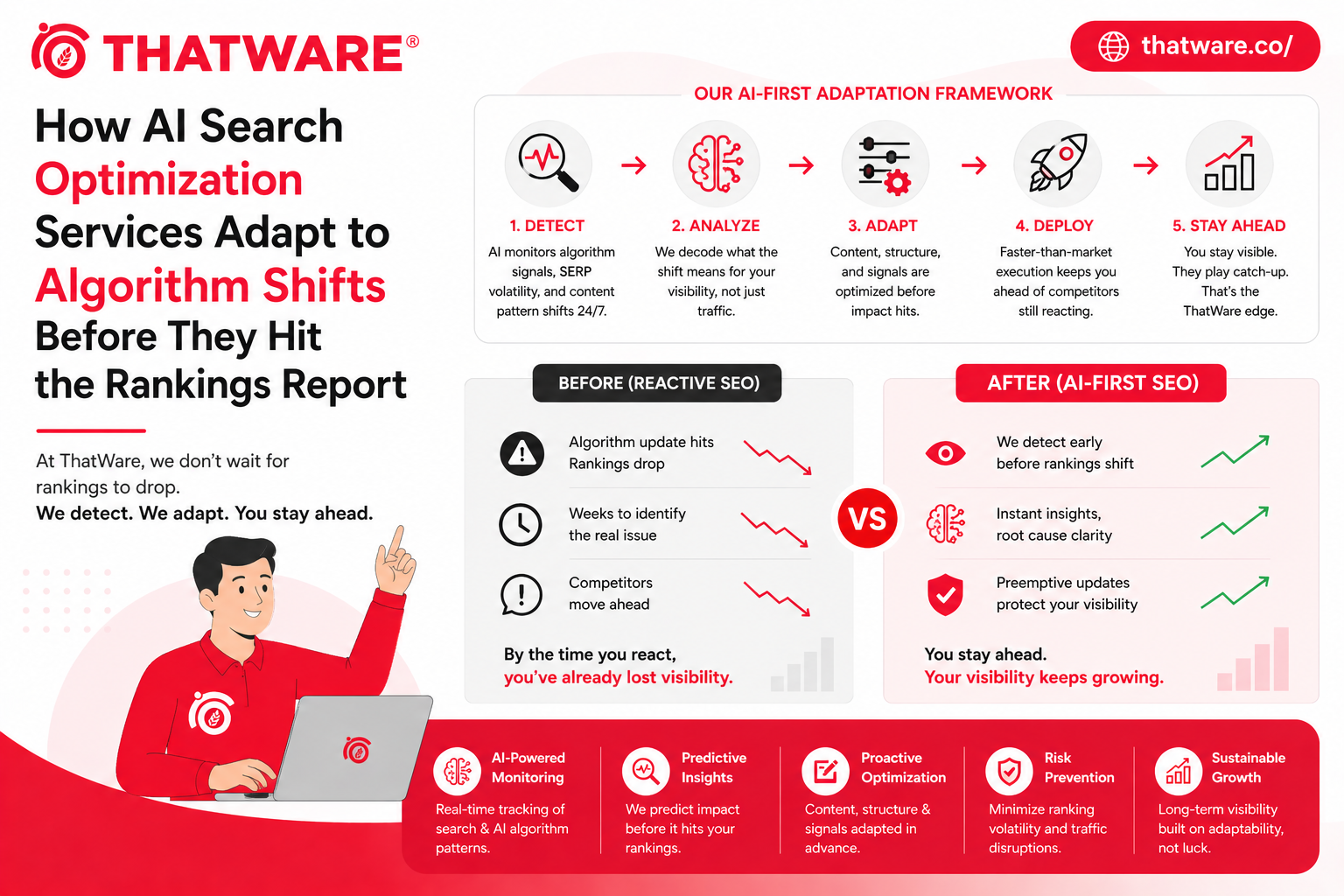

AI search optimization services are built to close the lag between change and response, not by predicting the unpredictable but by building the kind of structural content and authority foundation that benefits from AI-driven changes rather than being caught out by them.

Why Traditional Update Response Is Getting More Expensive

The reactive SEO cycle has always had costs. Ranking losses take time to recover from. Competitive positions lost during a response lag are sometimes permanently captured by competitors who were better positioned. Analysis and re-optimization consume resources.

Those costs are increasing as search evolves. Google’s core updates are more complex, affecting more ranking factors simultaneously. AI Overviews introduce a new visibility layer where position isn’t the only measure of performance. LLM-powered search tools operate on training cycles and content evaluation systems that are different from Google’s traditional crawl-and-index system.

Catching up after each change has become progressively more expensive, while the returns on reactive optimization are declining as the baseline quality bar rises. The economics are pushing toward proactive positioning.

The Structural Foundation That Absorbs Change

AI search optimization services that work well focus primarily on building a structural foundation that performs consistently across algorithm changes, rather than optimizing for whichever ranking factors matter right now.

What this foundation looks like, concretely: comprehensive topical coverage that establishes real authority across a topic area, rather than scattered keyword targeting. Clean technical infrastructure that doesn’t create indexation, crawlability, or rendering problems. Strong entity definition that gives AI systems a clear, accurate understanding of what the brand is and what it knows. Content that satisfies user intent at depth, addressing the full scope of what searchers in a given query context actually need, not just the primary question.

Content built on this foundation tends to respond positively to quality-focused updates because it was already optimized for what those updates are trying to reward. Technical work done to this standard tends to be resilient to crawl-related changes. Entity authority built deliberately tends to compound with each AI search system update rather than being disrupted by it.

This isn’t magic. It’s the difference between building toward a direction and building toward a specific current state. When the specific current state changes, direction-based investment holds up better.

Monitoring AI Search Visibility in Real Time

One of the practical capabilities that distinguishes sophisticated AI search optimization from conventional SEO is the monitoring infrastructure. Traditional SEO monitoring tracks keyword rankings, traffic volumes, and technical health metrics. These are necessary but not sufficient for AI search visibility.

AIEO services include monitoring systems specifically designed to track how AI systems represent and cite brands. This involves regular, systematic testing of how major AI platforms (ChatGPT, Perplexity, Google AI Overviews, and category-specific AI tools) respond to queries relevant to the brand. It includes tracking whether responses are accurate, whether the brand or its competitors are being cited, and whether the characterization aligns with what the brand wants to communicate.

This monitoring isn’t yet fully automated in the way keyword rank tracking is — the tooling is still maturing. But the brands building systematic manual and semi-automated AI visibility monitoring are creating an early warning system that lets them respond to AI search changes faster and more specifically than competitors operating without it.
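A semi-automated version of this tracking can start very small. The sketch below assumes the AI responses have already been collected by hand or by some other means and stored as plain text; it only counts which brands each response mentions. The brand names ("Acme Analytics", "Globex"), the `Observation` record, and both function names are hypothetical and chosen for illustration, and simple name matching will miss paraphrases or misspellings.

```python
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Observation:
    platform: str  # e.g. "ChatGPT" or "Perplexity"
    query: str     # the prompt that was tested
    response: str  # answer text, collected manually or otherwise


def mentions(text: str, name: str) -> bool:
    """True if `name` appears in `text` as a whole word or phrase, ignoring case."""
    pattern = r"(?<!\w)" + re.escape(name) + r"(?!\w)"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def citation_share(observations, brands):
    """Fraction of collected responses that mention each brand."""
    counts = Counter()
    for obs in observations:
        for brand in brands:
            if mentions(obs.response, brand):
                counts[brand] += 1
    total = len(observations)
    return {b: (counts[b] / total if total else 0.0) for b in brands}


def missing_brand(observations, brand, competitors):
    """Responses that mention at least one competitor but not the brand."""
    return [
        obs for obs in observations
        if not mentions(obs.response, brand)
        and any(mentions(obs.response, c) for c in competitors)
    ]


# Hypothetical sample data for illustration only.
observations = [
    Observation("ChatGPT", "best analytics tools",
                "Popular options include Acme Analytics and Globex."),
    Observation("Perplexity", "best analytics tools",
                "Globex is frequently recommended."),
]

print(citation_share(observations, ["Acme Analytics", "Globex"]))
for obs in missing_brand(observations, "Acme Analytics", ["Globex"]):
    print("Competitor cited without the brand:", obs.platform, "|", obs.query)
```

Run on a fixed query set at regular intervals, the share figures give a rough trend line, and the `missing_brand` list points to the specific answers worth reading by hand. Judging whether a response is accurate still needs human review.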

When AI systems begin characterizing a brand inaccurately, or when a competitor starts appearing in AI answers that should include the brand, those are optimization signals that require different responses than a traditional ranking drop. Catching them early and responding specifically is a competitive advantage.

Content Freshness and the AI Update Cycle

One dimension of AI search optimization that differs from traditional SEO is the relationship with content freshness. Traditional search rewards content freshness in specific ways — recent publication date for news-type queries, regular updates for time-sensitive content. AI search has a different freshness dynamic.

Language models are trained on snapshots of the web. The information they “know” reflects their training data at a specific point in time, and their knowledge of your brand and your content reflects what was available and well-represented during that training period. Continuously publishing fresh, authoritative content makes it more likely that the most recent training cycles have current, accurate information to draw from.

This makes consistent content production a more direct investment in AI visibility than it might appear — not just because fresh content ranks in traditional search, but because it’s the raw material that shapes how AI systems understand and represent your brand over time. Brands that stop publishing, or that let their content age without updates, are potentially being represented by outdated information in AI systems long after they’ve updated their traditional web presence.
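One way to keep aging content from going unnoticed is a periodic staleness check. The sketch below assumes a simple mapping from page path to last-updated date, which in practice might come from a CMS export or a sitemap; the paths, the dates, and the 180-day threshold are illustrative assumptions, not recommendations drawn from any AI platform.

```python
from datetime import date, timedelta


def stale_pages(pages, today, max_age_days=180):
    """Return (path, age_in_days) for pages older than the threshold, oldest first.

    `max_age_days` is an assumed review threshold; tune it per content type.
    """
    cutoff = today - timedelta(days=max_age_days)
    stale = [
        (path, (today - updated).days)
        for path, updated in pages.items()
        if updated < cutoff
    ]
    return sorted(stale, key=lambda item: item[1], reverse=True)


# Hypothetical inventory for illustration only.
pages = {
    "/about": date(2023, 1, 10),
    "/pricing": date(2024, 5, 1),
    "/blog/launch": date(2024, 2, 15),
}

for path, age in stale_pages(pages, today=date(2024, 6, 1)):
    print(f"{path} was last updated {age} days ago")
```

Passing `today` explicitly rather than calling `date.today()` inside the function keeps the check reproducible, which helps when comparing reports from different dates.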

Adapting When AI Search Changes

When significant changes do occur — a new AI Overview format, a change in how Perplexity sources content, a shift in how ChatGPT weights different types of sources — the brands that adapt most effectively tend to share a few characteristics.

They have the monitoring infrastructure to detect changes quickly rather than learning about them from traffic drops. They have content that’s architecturally flexible, not built around a single format or assumption that a system change can invalidate. And they orient their work around the underlying principles of what AI systems are trying to accomplish (serve users with accurate, comprehensive, trustworthy information) rather than just the current mechanical implementation.

The last point is probably the most important. AI search systems, whatever specific changes they undergo, are consistently oriented toward the same underlying goal: getting users accurate, useful answers from credible sources. Content and brand authority built genuinely toward that goal has an alignment with the direction of AI search development that reactive optimization can’t replicate. Change-resilience, ultimately, comes from being built for what the systems are trying to do — not just for what they’re currently measuring.
